Analysts are saying "we are cooked," but human behavior has something to add.

The data shows what AI could theoretically do to your career. It says nothing about what humans will choose to hold onto, and that turns out to be the more interesting question.

Everyone seems to have made up their mind about AI and jobs.

Either the robots are coming for everything, and we should panic, or the disruption is overblown, and we should relax.

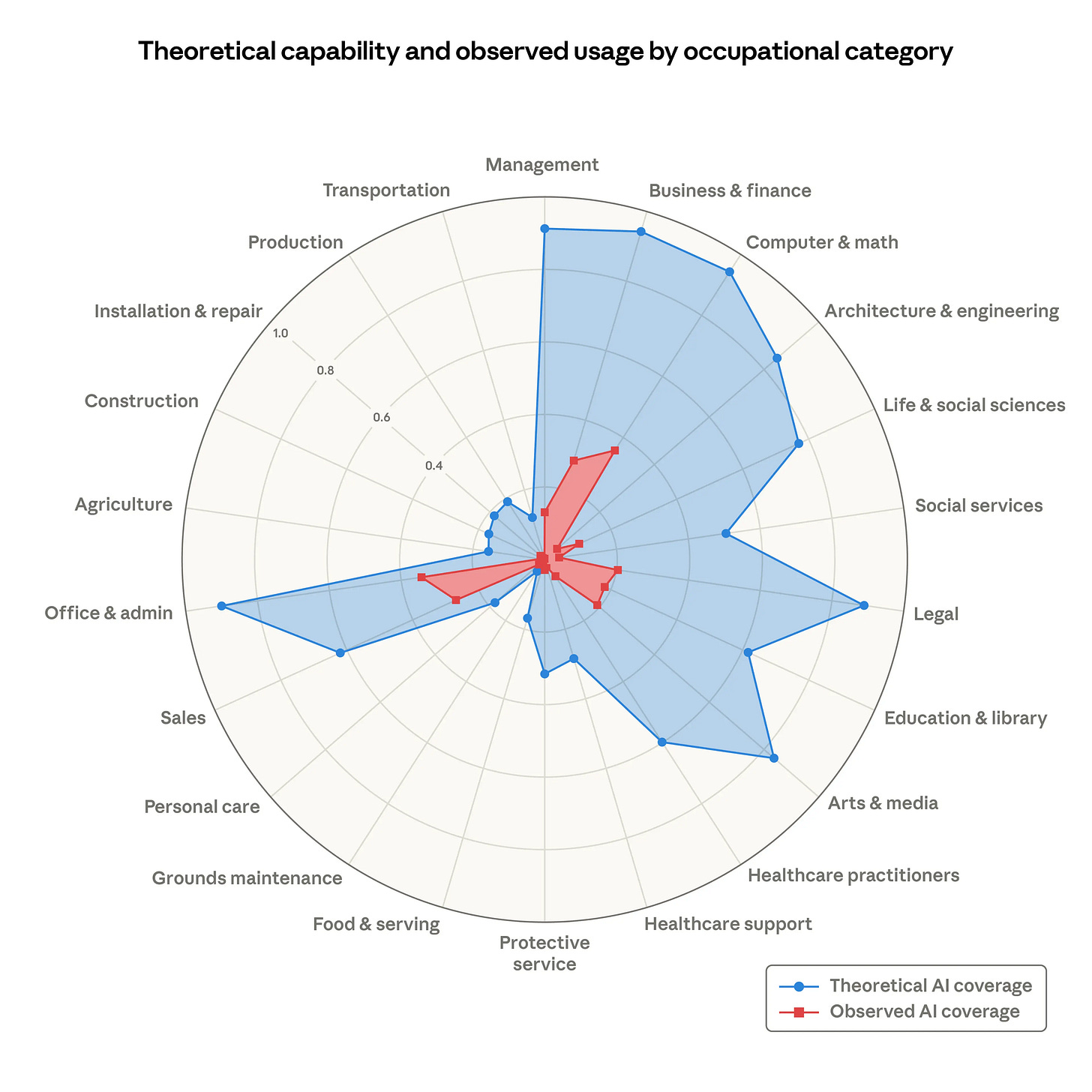

Anthropic published research this week that sits uncomfortably in between those two positions, and I think that discomfort is actually the most useful thing about it. They built something called an "observed exposure index" that measures not just what AI could theoretically do to a given profession, but what people are actually using it for right now in real professional settings.

The gap between those two numbers is enormous, and depending on how you read it, that gap is either deeply reassuring or the most alarming part of the whole picture.

Language models could theoretically speed up 94% of all computer and mathematical tasks. In practice, actual usage data shows AI currently handling only 33% of those tasks. Office and Admin sits at 25% real-world coverage against 90% theoretical. Business and Financial sits at 20% against 85%. The popular framing is "we are cooked." The more accurate framing is that we are somewhere in the early chapters of a long book, and the red (observed usage) will keep growing.
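To make that gap concrete, here is a minimal sketch of the arithmetic using only the three pairs of figures quoted above. The function name `exposure_gap` and the "share of potential in use" ratio are my own framing for illustration, not something taken from the Anthropic paper.

```python
# Figures quoted above, as (theoretical %, observed %).
coverage = {
    "Computer and Mathematical": (94, 33),
    "Office and Admin": (90, 25),
    "Business and Financial": (85, 20),
}


def exposure_gap(theoretical, observed):
    """Hypothetical helper: return the gap in percentage points and the
    share of the theoretical potential that is already in real use."""
    return theoretical - observed, observed / theoretical


for field, (theoretical, observed) in coverage.items():
    gap, realized = exposure_gap(theoretical, observed)
    print(f"{field}: {gap} points unrealized, {realized:.0%} of potential in use")
```

In all three fields, roughly a quarter to a third of what is theoretically possible shows up in actual usage, which is the "early chapters" point in numbers.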

The paper names the scenario everyone in the knowledge economy should be thinking about: a “Great Recession for white-collar workers,” drawing a comparison to the 2007-2009 financial crisis when US unemployment doubled from 5% to 10%. So the alarm isn’t wrong, exactly, but the chart is only telling you what’s technically possible. It says nothing about what people will actually choose.

I wrote something on 84Futures that I keep coming back to in light of this research. The premise was that as automation reshaped the workforce, something unexpected happened: people began longing for the jobs they had left behind. Not for the paycheck or the grind, but for the small, often overlooked human interactions that gave work meaning. Doctors whose value shifted entirely to empathy and presence. In-person sales that came back because trust had become a premium in a world full of deepfakes. Craftspeople whose handmade goods became luxury items precisely because a machine could replicate them perfectly. I wrote it as near-future speculation, but the Anthropic data makes it feel much less fictional.

Workers in the most exposed occupations are more often female, better educated, and earn on average 47% more than the unexposed group. This wave is hitting knowledge workers first, not the trades. The person who graduated with debt and went into finance, legal, or marketing is more exposed than the electrician or the plumber. And yet the research also shows that workers in the most exposed occupations have not become unemployed at meaningfully higher rates than workers in jobs considered AI-proof, at least not yet. The disruption is happening at the entry point, not across the whole workforce simultaneously. Young workers aged 22 to 25 are being hired less frequently (read: “Companies are reversing course on hiring graduates”) in professions with high AI exposure, suggesting that the technology is already reshaping entry-level employment.

That’s where the data ends, but it’s where the human question picks up, and it’s the one I find more interesting: when AI can do something, does that mean people will let it?

The 84Futures piece argued that nostalgia for human connection isn’t really about resisting technology as the Luddites did in industrial Britain; it’s about wanting to believe that a human was still necessary somewhere in the process. That instinct is going to shape which parts of the blue bars actually become red, and which ones stay theoretical forever because we collectively decide we’d rather pay more for the human version.

Which brings me to a parallel conversation I’ve been watching about a job title Jason Calacanis, one of Uber’s earliest investors, recently named. He called it the “agent maestro”: the person who can take a business process, explain it, and train an agent to execute it. Not a developer, not someone who codes, but someone who understands how a business works and can break that understanding into instructions an agent can follow and then iterate on over time. David Sacks added the broader frame that every company adopting new technology faces a massive change management problem, and the person who can lead that adaptation will have an extraordinary career ahead of them.

At FOMO.ai, we’re living this. The people who have become indispensable on my team aren’t necessarily the ones who understand the technology most deeply. They’re the ones who understand the workflow, can hand it to an agent or the dev team, and then catch where it’s not aligned to their vision. That skill sits right at the intersection of the Anthropic data and the nostalgia thesis: human judgment applied to machine output, which turns out to be exactly what neither a blue bar nor a red bar can fully capture.

Roughly 30% of workers have no AI exposure, including those in fields that require a physical presence no language model can replicate. For everyone else, the question is whether you end up designing the system, being absorbed by it, or being chosen because of nostalgia. The chart shows you the territory, but what it can’t tell you is how much of that territory humans will actually surrender, and how much they’ll decide, quietly and collectively, they’d rather keep for themselves.

Dax is the Co-Founder & CEO @ FOMO.ai, and the author of 84Futures.com.
